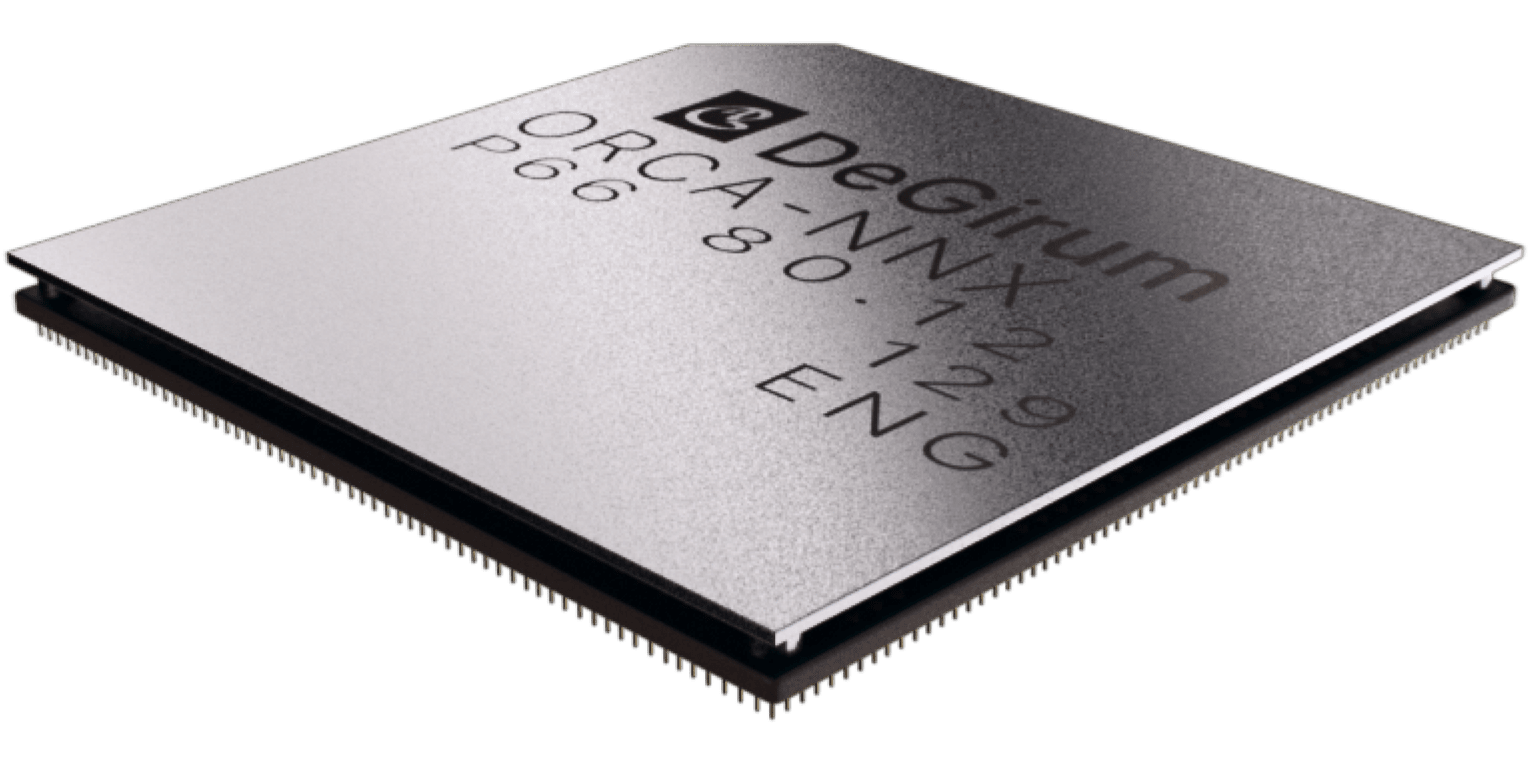

Orca Family

DeGirum® Orca is a flexible, efficient, and affordable AI accelerator IC. Orca gives application developers the ability to build rich, sophisticated, and highly functional products at a power budget and price point suited to the edge.

U.S.-based customers can use the Buy button.

For international orders or orders of 10 or more units, submit a Product Inquiry.

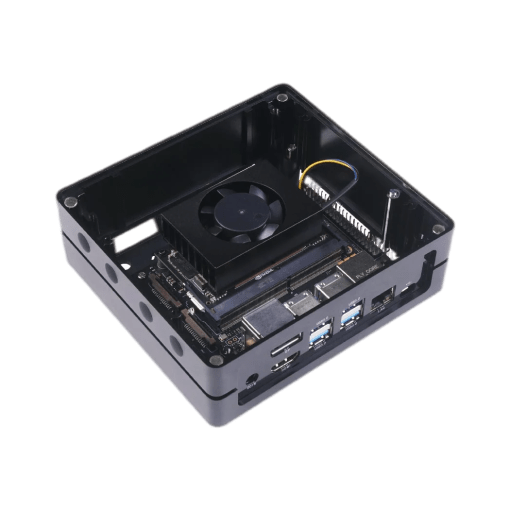

Product Line

| Part / Module | Availability |
|---|---|
| Orca M.2 (DRAM) | Q2 2023 |
| Orca M.2 (DRAM-less) | Q2 2023 |
| Orca USB Dongles | Q2 2023 |
| Orca ASIC | Q2 2023 |
